MCP gateways have become essential infrastructure for teams deploying AI agents at scale. Without a governance layer between your agents and the tools they call, you’re looking at fragmented security, uncontrolled costs, and zero audit trails. This guide covers five production-ready MCP gateways: Bifrost, Docker MCP Gateway, Kong AI Gateway, Composio, and IBM ContextForge.

The Model Context Protocol has become the standard for connecting AI models to external tools and APIs. Now adopted by OpenAI, Google, and Microsoft alongside Anthropic, MCP defines how LLM applications discover and interact with external capabilities.

But MCP solves connectivity, not governance. Directly connecting agents to dozens of tool endpoints works for demos. In production, without centralized access control, you can’t answer basic questions: Which tools can this agent call? Who triggered that API request? Why did this workflow cost $2,000 overnight?

An MCP gateway centralizes authentication, authorization, auditing, and traffic management into a single control plane. Here are five worth evaluating in 2026.
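To make the audit questions above concrete, here is a minimal sketch of the kind of record a gateway could keep per tool call, and how two of those questions become simple queries. The `ToolCall` fields, agent names, tool names, and cost figures are all hypothetical and are not taken from any of the gateways covered here.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    """One hypothetical audit-log entry written by a gateway."""
    agent: str        # which agent (or credential) triggered the call
    tool: str         # tool name as exposed through the gateway
    cost_usd: float   # illustrative cost attributed to this call


def cost_by_agent(log):
    """Answer 'why did this workflow cost so much?' by summing cost per agent."""
    totals = defaultdict(float)
    for call in log:
        totals[call.agent] += call.cost_usd
    return dict(totals)


def callers_of(log, tool):
    """Answer 'who triggered that API request?' for a given tool."""
    return sorted({call.agent for call in log if call.tool == tool})


# Made-up entries, purely for illustration.
audit_log = [
    ToolCall("billing-agent", "crm_lookup", 0.25),
    ToolCall("billing-agent", "send_email", 0.5),
    ToolCall("support-agent", "crm_lookup", 0.25),
]

print(cost_by_agent(audit_log))
print(callers_of(audit_log, "crm_lookup"))
```

Without a single choke point, these records end up scattered across every tool server, which is why the questions are hard to answer when agents connect directly.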

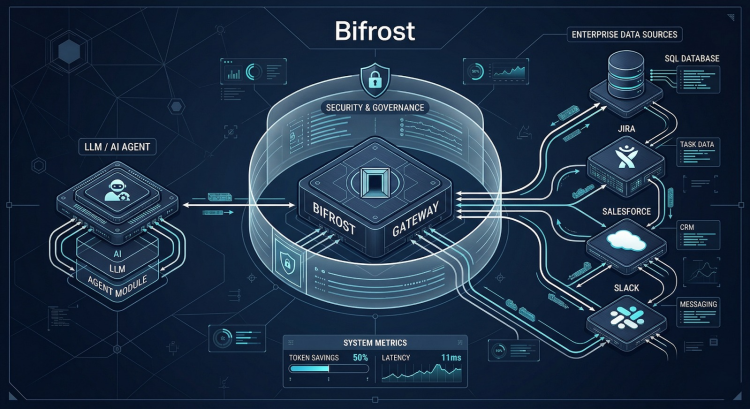

1. Bifrost

Platform overview

Bifrost is an open-source, high-performance AI gateway built in Go that operates as both an LLM gateway and an MCP gateway within a single platform. Production AI agents need both model routing and tool access governance, and Bifrost delivers both through one control plane.

Bifrost acts as both an MCP client and server. As a client, it connects to external MCP servers via STDIO, HTTP, or SSE. As a server, it exposes all connected tools through a single /mcp endpoint that Claude Code, Cursor, or any MCP client can connect to. At sustained 5,000 RPS, Bifrost adds roughly 20 microseconds of overhead, a meaningful advantage in agentic workflows where latency compounds across multiple LLM and tool calls.
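For a sense of what travels over that single endpoint, here is a rough sketch of an MCP `tools/call` message. MCP messages are JSON-RPC 2.0, so the envelope below follows the protocol. The host, port, and the `path` argument are assumptions for illustration, and the sketch only builds the request body rather than sending it. A real session would also start with the protocol's `initialize` handshake, which is omitted here.

```python
import json

# Hypothetical address; only the /mcp path comes from the description above.
GATEWAY_URL = "http://localhost:8080/mcp"


def tools_call_request(request_id, tool_name, arguments):
    """Build an MCP tools/call request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# The tool's argument schema is assumed; a real server defines its own.
payload = tools_call_request(1, "filesystem_read", {"path": "README.md"})
body = json.dumps(payload)

print(f"POST {GATEWAY_URL}")
print(body)
```

Because every client sends the same message shape to one URL, the gateway can apply access control and logging without the client knowing which upstream server actually owns the tool.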

Features

- Virtual keys with tool-level scoping: Virtual keys issue scoped credentials per consumer. Each key specifies which tools it can call at the tool level, not just the server level. You can allow filesystem_read but block filesystem_write from the same MCP server.

- MCP Tool Groups: Tool Groups let you define named collections of tools from multiple MCP servers and attach them to virtual keys, teams, or customers. Bifrost resolves permissions at request time with everything indexed in memory and synced across cluster nodes.

- Code Mode: The standard MCP model injects every tool definition into context on every request. Connect 5 servers with 30 tools each, and that’s 150 definitions before the model sees your prompt. Code Mode replaces this with four meta-tools. The model reads only the definitions it needs, writes a short Python-style script, and Bifrost executes it in a sandboxed Starlark interpreter (Starlark is a restricted dialect of Python). The result: roughly 50% reduction in token cost and 30 to 40% faster execution.

- Full audit logging and per-tool cost tracking: Every tool call is a first-class log entry with tool name, server, arguments, result, latency, and the triggering virtual key. Bifrost also tracks cost at the tool level alongside LLM token costs, giving you a complete picture of what each agent run actually costs.

- Unified LLM routing: Multi-provider support across OpenAI, Anthropic, AWS Bedrock, Google Vertex, Azure, Mistral, Groq, Ollama, and more with automatic failover, load balancing, and semantic caching.
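The tool-level scoping and Tool Groups described above can be sketched as a simple permission check. This is an illustrative model only, not Bifrost's configuration format or API: the `VirtualKey` class, the `TOOL_GROUPS` mapping, the group name, and `crm_lookup` are hypothetical. Only `filesystem_read` and `filesystem_write` come from the example in the list.

```python
from dataclasses import dataclass, field


@dataclass
class VirtualKey:
    """Hypothetical scoped credential: direct tool grants plus named groups."""
    name: str
    allowed_tools: set = field(default_factory=set)
    groups: list = field(default_factory=list)


# Hypothetical named collection spanning tools from more than one server.
TOOL_GROUPS = {
    "read_only": {"filesystem_read", "crm_lookup"},
}


def effective_tools(key, groups):
    """Union of tools granted directly and through attached groups."""
    tools = set(key.allowed_tools)
    for group_name in key.groups:
        tools |= groups.get(group_name, set())
    return tools


def is_allowed(key, tool, groups=TOOL_GROUPS):
    """Resolve the permission at request time, per tool rather than per server."""
    return tool in effective_tools(key, groups)


analyst_key = VirtualKey("analyst", groups=["read_only"])

print(is_allowed(analyst_key, "filesystem_read"))
print(is_allowed(analyst_key, "filesystem_write"))
```

The point of the sketch is the granularity: both filesystem tools live on the same server, yet the key can reach one and not the other.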

Best for: Teams that need MCP tool governance unified with LLM routing, enterprise access control, ultra-low latency, and native observability. Organizations like Clinc, Thoughtful, and Atomicwork rely on Bifrost for production AI infrastructure.

2. Docker MCP Gateway

Platform overview

Docker MCP Gateway is Docker’s open-source solution for orchestrating MCP servers using container-native patterns. It runs each MCP server in isolated Docker containers with restricted privileges and resource limits, managing the full server lifecycle automatically.

Features

- Container isolation with cryptographic image signing and supply-chain verification.

- Profile-based server management for consistency across clients.

- Built-in secrets management through Docker Desktop.

- OAuth integration and interceptors for policy enforcement, including secret blocking.

3. Kong AI Gateway

Platform overview

Kong AI Gateway extends Kong’s API management platform to support MCP traffic through its plugin architecture. MCP capabilities sit alongside Kong’s LLM gateway, applying the same governance model to AI tool interactions that enterprises already use for API traffic.

Features

- AI MCP Proxy plugin for protocol bridging between MCP and HTTP.

- OAuth 2.1 support via the AI MCP OAuth2 plugin.

- MCP-specific Prometheus metrics.

- MCP Registry in Kong Konnect for centralized tool discovery.

- Full plugin ecosystem (OIDC, mTLS, rate limiting, OpenTelemetry) applicable to MCP traffic.

4. Composio

Platform overview

Composio is a managed integration platform providing an MCP gateway focused on breadth of integrations. With 500+ pre-built managed integrations and unified authentication, it reduces integration burden for teams connecting agents to diverse external tools.

Features

- 500+ managed integrations with automatic authentication handling.

- Unified auth layer across all connected tools.

- Framework-agnostic support for LangChain, CrewAI, and other agent frameworks.

5. IBM ContextForge

Platform overview

IBM ContextForge is an open-source AI gateway that federates tools, agents, models, and APIs into a single MCP-compliant endpoint with multi-cluster Kubernetes support and auto-discovery across distributed deployments.

Features

- Multi-protocol federation across MCP, REST-to-MCP, gRPC-to-MCP, and the A2A protocol.

- Built-in LLM proxy supporting 8+ providers.

- 40+ plugins for transports and integrations.

- OpenTelemetry observability with Phoenix, Jaeger, and Zipkin support.

Choosing the Right MCP Gateway

If you’re running Docker everywhere, Docker MCP Gateway applies familiar container patterns. If you have an existing Kong deployment, extending it with MCP plugins avoids new infrastructure. If you need 500+ integrations fast, Composio gets you there. If you need multi-protocol federation, ContextForge covers the broadest surface area.

If you need your LLM calls and MCP tool calls flowing through the same gateway with unified access control, cost visibility, and audit logging, Bifrost is purpose-built for that. Its Code Mode alone addresses one of the most expensive problems in production MCP: the context bloat from injecting every tool definition into every request.
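To put rough numbers on that context bloat, here is a back-of-the-envelope sketch. The 5 servers with 30 tools each and the four meta-tools come from the Bifrost section. The 200 tokens per definition is purely an assumption, and the calculation counts only the definitions sent up front. It ignores the definitions a model reads on demand and the script it writes, so it is not a reproduction of the roughly 50% figure quoted earlier.

```python
# Assumption: average size of one tool definition in tokens (not a measured value).
TOKENS_PER_DEFINITION = 200


def injected_definitions(servers, tools_per_server):
    """Definitions placed in context when every tool is injected on every request."""
    return servers * tools_per_server


def upfront_tokens(definitions, tokens_per_definition=TOKENS_PER_DEFINITION):
    """Tokens spent on tool definitions before the model sees the prompt."""
    return definitions * tokens_per_definition


all_tools = injected_definitions(servers=5, tools_per_server=30)  # 150 definitions
standard = upfront_tokens(all_tools)   # every definition, every request
meta_only = upfront_tokens(4)          # only the four meta-tools up front

print(f"definitions injected: {all_tools}")
print(f"upfront tokens, all tools: {standard}")
print(f"upfront tokens, four meta-tools: {meta_only}")
```

Whatever the real per-definition size is, the upfront cost in the standard model grows with every server you connect, while the meta-tool overhead stays fixed.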